World's most flexible CRM

making the complex possible

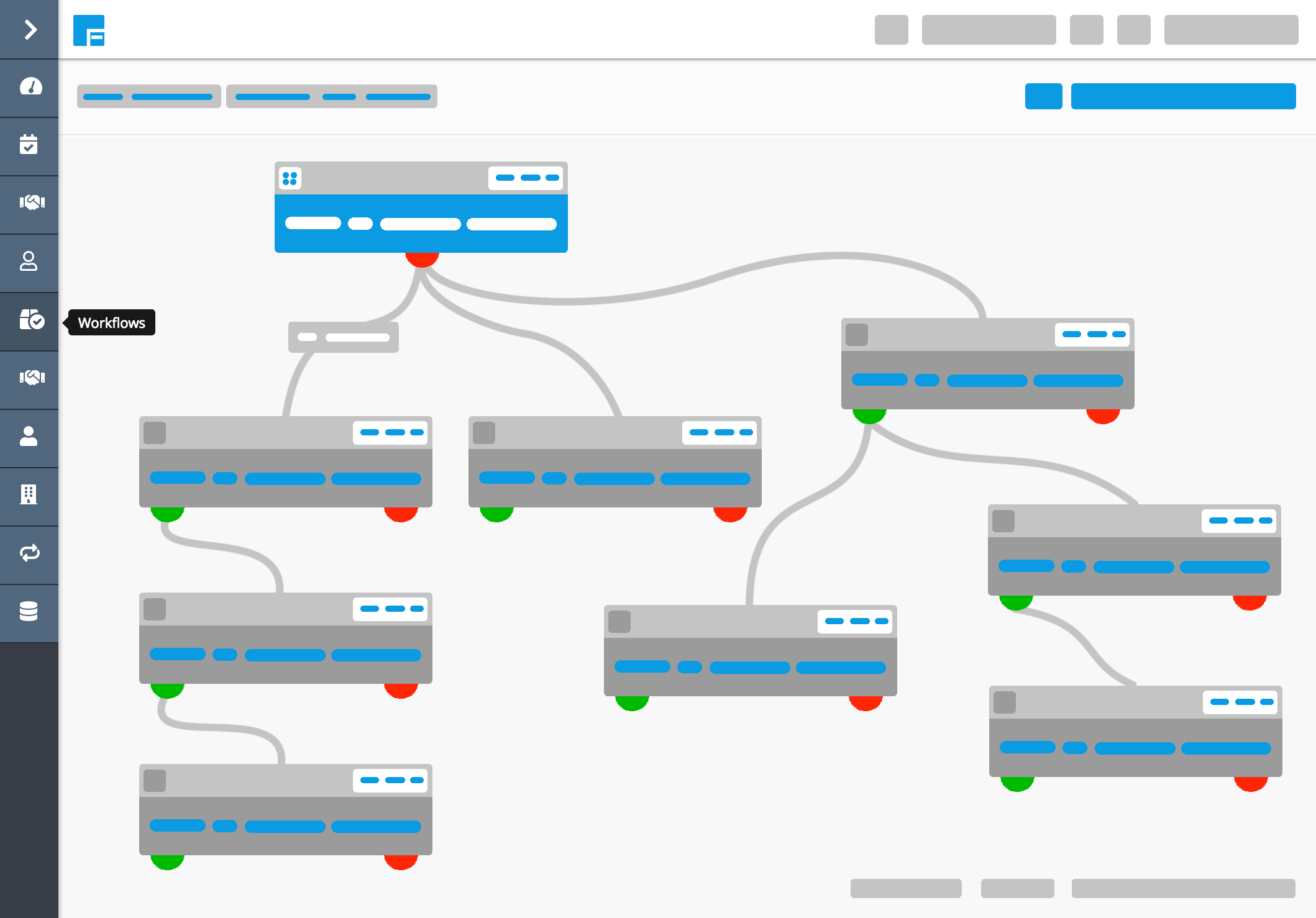

Build deep relationships with your customers by automating your communications. Shape Flexie to adapt to your business requirements, not vice-versa.

Start building your first workflow right now!